Abstract

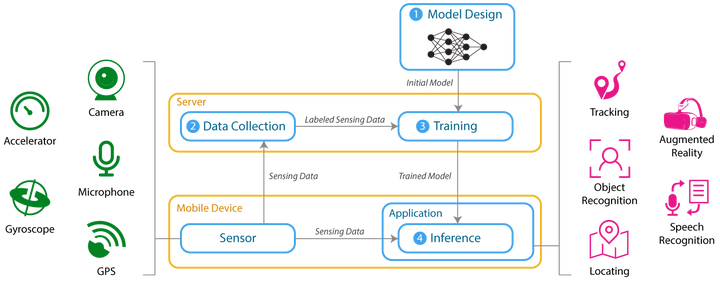

Recently, deep learning has been applied to mobile sensing problems, and its inference phase is preferably run on the mobile devices themselves to obtain fast responses. However, mobile devices are resource-constrained in both computation and power, while a single deep learning inference task involves tens of billions of mathematical operations and tens of millions of parameter reads. Reducing the energy consumption of deep learning inference is therefore a critical issue. In this article, we survey various energy reduction approaches and classify them into three categories: compressing the neural network model, minimizing the data transfer required during computation, and offloading workloads. We also simulate and compare three model compression techniques by applying them to an object recognition problem.